Phonely’s A/B Testing tool helps you experiment, measure, and improve your voice AI’s performance with real-world data. It lets you compare different versions of your AI agent, such as voice, workflow, or conversation settings, to determine which one performs best in live calls. This guide explains how A/B testing works, how to create and run a test, and how to interpret the results.

What is A/B Testing in Phonely?

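A/B testing lets you run controlled experiments between two versions of your AI agent. Phonely routes a share of live calls to each version and compares how well each one meets your success criteria.

- The Base Agent (Control): your existing setup.

- The Test Agent (Variant): a duplicate of the Base Agent with the specific changes you want to test.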

Access A/B Testing

- Open the Phonely menu and click Testing.

- Choose A/B Testing from the top navigation bar.

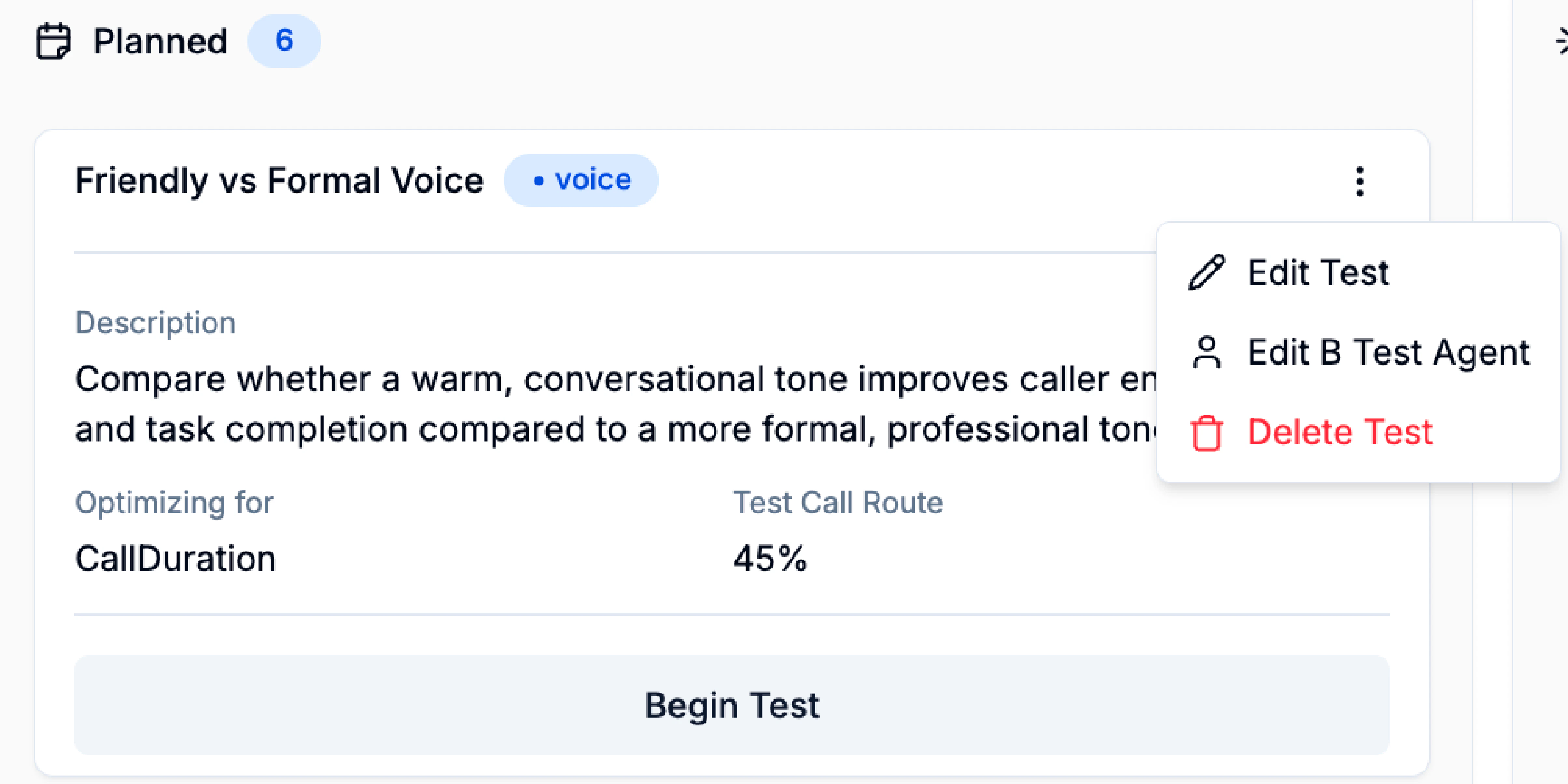

The A/B Testing dashboard groups your tests into three sections:

- Planned – Tests you’ve set up but haven’t started yet.

- In Progress – Tests currently running on live calls.

- Completed – Finished tests where you can review performance results.

Creating a New A/B Test

Click Create a new test in the Planned section to begin. A step-by-step setup window will appear.

Name and Describe Your Test

Give your test a descriptive name that identifies what you’re testing.

Example: “Friendly Voice vs Formal Voice – Support Line.”

Add a description to explain the goal of your test.

Example: “Evaluate whether a friendly voice style improves appointment confirmations.”

Choose What You’d Like to Test

Phonely supports multiple types of tests depending on your experiment goal. You can select one of the following:

| Type | What It Tests | Common Use Case |
|---|---|---|
| Voice | Compares different AI voices or tones | Test whether a friendly voice leads to higher customer engagement |
| Workflow | Tests different conversation flows or logic | Compare two call scripts or routing paths |
| Agent Settings | Evaluates settings like interruption, delay, or background noise | Find the balance between quick responses and natural flow |
| Knowledge Base | Tests different documentation sources | See which knowledge sources improve answer accuracy |
| Other | Any test outside these categories | |

Define End Criteria and Call Distribution

Here, you’ll specify how long the test should run and what share of calls should be routed to your test version.

End Criteria

Choose when the test should stop automatically:

- By Number of Calls: Ends after a set number of test calls. Example: Stop after 1,000 calls routed to the test version.

- By Number of Days: Runs for a fixed duration (e.g., 10 days).

- AI-Determined: Lets Phonely decide automatically when enough data has been collected.

Call Route Percentage

Use the slider to define how much traffic is sent to the test version.

- Example: Route 30% of calls to the test, and keep 70% on the Base Agent.

- Recommendation: Start small (20–30%) to ensure stability before scaling up.
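The end criteria and the route percentage interact: when a test stops after a set number of test calls, the share of traffic sent to the Test Agent determines how many total calls, and therefore roughly how many days, the test needs. The back-of-the-envelope sketch below assumes each call is routed to the Test Agent at the configured percentage on average and that daily call volume (a hypothetical input) is steady. `estimate_test_length` is an illustrative helper, not part of Phonely.

```python
import math


def estimate_test_length(test_calls_target: int, route_percent: float,
                         daily_calls: int) -> tuple[int, int]:
    """Roughly estimate total calls and days needed for the Test Agent
    to receive `test_calls_target` calls.

    Hypothetical helper: assumes the Test Agent receives, on average,
    route_percent% of calls and that daily call volume is steady.
    """
    if not 0 < route_percent <= 100:
        raise ValueError("route_percent must be in (0, 100]")
    if test_calls_target <= 0 or daily_calls <= 0:
        raise ValueError("call counts must be positive")
    share = route_percent / 100
    # Total calls needed so that, on average, `share` of them reach the Test Agent.
    total_calls = math.ceil(test_calls_target / share)
    # Days needed at the assumed daily volume, rounded up to whole days.
    days = math.ceil(total_calls / daily_calls)
    return total_calls, days


# Example: stop after 1,000 test calls, route 30%, ~500 calls/day (hypothetical volume).
total_calls, days = estimate_test_length(1000, 30, 500)
print(total_calls, days)  # 3334 7
```

Treat the result as an estimate: the actual split varies around the configured percentage, so the test may end a little earlier or later.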

Set Success Criteria

This step defines what “success” means for your test. You can base success on how calls end, what outcomes are tagged, or how long they last.

Call Ended Reason-Based Testing

Evaluates success based on how the call ended. Use this if you care about the technical or behavioural outcome of the call.

Example use case: “We want more calls to end in transfers to the sales team.”

Call Outcome-Based Testing

Evaluates success based on your defined business outcomes, which you configure inside your flow.

Example use case: “We want to see if the new workflow increases lead qualification rate.”

Duration-Based Testing

Optimizes for call length.

- Shorter calls: Indicate greater efficiency or faster resolution (ideal for support or routing).

- Longer calls: Indicate better engagement or deeper discussions (ideal for sales).
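Conceptually, each of these criterion types is a yes/no check applied to every completed call. The sketch below is an illustration only: the `CallRecord` fields (`end_reason`, `outcome`, `duration_s`), the criterion dictionary, and the duration target in seconds are hypothetical and do not reflect Phonely’s actual data model or API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CallRecord:
    """Hypothetical call record; field names are illustrative, not Phonely's schema."""
    end_reason: str
    outcome: Optional[str]
    duration_s: float


def is_success(call: CallRecord, criterion: dict) -> bool:
    """Return True if the call meets a (hypothetical) success criterion."""
    kind = criterion["type"]
    if kind == "end_reason":
        # Call Ended Reason-Based: success if the call ended the desired way.
        return call.end_reason == criterion["target"]
    if kind == "outcome":
        # Call Outcome-Based: success if the call was tagged with the desired outcome.
        return call.outcome == criterion["target"]
    if kind == "duration":
        # Duration-Based: compare against an assumed duration target in seconds.
        if criterion["direction"] == "shorter":
            return call.duration_s <= criterion["seconds"]
        if criterion["direction"] == "longer":
            return call.duration_s >= criterion["seconds"]
        raise ValueError("direction must be 'shorter' or 'longer'")
    raise ValueError(f"unknown criterion type: {kind!r}")


calls = [
    CallRecord("transferred_to_sales", "lead_qualified", 210.0),
    CallRecord("customer_hung_up", None, 45.0),
    CallRecord("transferred_to_sales", None, 95.0),
]
wants_transfer = {"type": "end_reason", "target": "transferred_to_sales"}
print(sum(is_success(c, wants_transfer) for c in calls))  # 2
```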

LLM-Based Evaluation

A future option will let Phonely’s AI analyze transcripts and evaluate call quality automatically based on context.

Once you’ve chosen and configured your success criteria, click Next.

Editing the Test Agent

After setup, Phonely automatically duplicates your Base Agent into a Test Agent. You’ll see a banner:

“You are editing a test agent. This agent will be used to test the new changes.”

Make only the changes you want to test, and keep all other elements identical so that results reflect only those changes. When you’re done, click Continue to save your Test Agent.

Running the A/B Test

After your setup is complete, you’ll return to the A/B Testing dashboard.

- Under the Planned section, find your new test.

- Click Begin Test to start routing calls.

Once the test is running, the dashboard shows:

- Success rate for each agent.

- Total answered calls.

- Traffic allocation.

Monitoring and Analyzing Results

You can monitor results at any time during the test.

- Track success rate trends to see which agent performs better.

- Check that call allocation stays in line with the route percentage you set.

- Review call outcomes and end reasons to ensure tagging consistency.

The results view shows:

- Performance metrics (success rate, duration, end reason distribution).

- Comparative insights between the Base and Test agents.

- Which version better met your success criteria.
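For the allocation check in the monitoring list, one simple approach is to compare the variant’s observed share of calls with the route percentage you configured. This is a minimal sketch: the helper name and the 3-percentage-point tolerance are hypothetical choices, not Phonely settings, and some deviation is expected early on, when only a few calls have been routed.

```python
def allocation_drift(control_calls: int, variant_calls: int, route_percent: float) -> float:
    """Percentage points by which the variant's observed share of calls differs
    from the configured route percentage (positive = more calls than configured)."""
    total = control_calls + variant_calls
    if total == 0:
        raise ValueError("no calls routed yet")
    observed_percent = 100 * variant_calls / total
    return observed_percent - route_percent


# Hypothetical counts for a test configured to route 30% of calls to the variant.
drift = allocation_drift(control_calls=650, variant_calls=350, route_percent=30)
TOLERANCE_PP = 3.0  # hypothetical threshold for "worth a closer look"
if abs(drift) > TOLERANCE_PP:
    print(f"Allocation is off by {drift:+.1f} percentage points")
```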

How Phonely Calculates A/B Test Results

Phonely compares the Base Agent and Test Agent using the success criteria selected when the test was created. A call counts as a success when it matches that criterion, such as the desired call outcome, end reason, or duration target. In the results view:

- Control or A means the Control Agent (your Base Agent).

- Variant or B means the Variant Agent (your Test Agent).

- Calls means completed calls for that arm.

- Successes means completed calls that met the success criteria.

- Success rate is successes / calls, displayed as a rounded percentage.

Success Rates

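Phonely calculates each arm’s success rate as:

$$
p_A = \frac{s_A}{n_A}, \qquad p_B = \frac{s_B}{n_B}
$$

where $n_A$ and $s_A$ are the control’s completed calls and successes, and $n_B$ and $s_B$ are the variant’s.

Delta and Winner

The delta is the difference between the two displayed success rates, in percentage points:

$$
\Delta = \text{variant rate (\%)} - \text{control rate (\%)}
$$

A positive delta means the variant’s displayed rate is higher; a negative delta means the control’s is higher.

Two-Proportion Z-Test

Phonely uses a two-proportion z-test to estimate whether the difference between the Base Agent and Test Agent is likely to be real or just noise from a limited sample of calls. The calculation uses the raw call counts and success counts, with the pooled success rate $\bar{p}$:

$$
\bar{p} = \frac{s_A + s_B}{n_A + n_B}, \qquad
z = \frac{p_B - p_A}{\sqrt{\bar{p}\,(1 - \bar{p})\left(\frac{1}{n_A} + \frac{1}{n_B}\right)}}
$$

Chance Variant Wins

The “chance variant wins” number is calculated from the z-score using the standard normal cumulative distribution function $\Phi$:

$$
\text{chance variant wins} = \Phi(z)
$$

P-Value

Phonely’s displayed p-value is a one-sided p-value for the question “is the variant better than the control?”:

$$
p\text{-value} = 1 - \Phi(z)
$$

Confidence Interval

Phonely also estimates a 95% confidence interval for the difference between the variant and control success rates. This interval uses the unpooled standard error:

$$
SE = \sqrt{\frac{p_A(1 - p_A)}{n_A} + \frac{p_B(1 - p_B)}{n_B}}, \qquad
\text{95\% CI} = (p_B - p_A) \pm 1.96 \cdot SE
$$

How to Explain a Result Like “11% Chance Variant Wins”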

When explaining a result to a user, use the test’s actual call counts and success counts, then walk through the result in this order:

- State which arm is control and which arm is variant.

- Show completed calls and successes for each arm.

- Calculate each success rate as successes / completed calls.

- Compare the rates and state the delta in percentage points.

- Explain the z-score: positive favors the variant, negative favors the control.

- Explain the chance variant wins as normalCdf(z-score).

- Explain the p-value as 1 - normalCdf(z-score).

- Explain the 95% confidence interval for variant rate - control rate.

- End with a plain-language interpretation, not a guarantee.
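The walkthrough above can be reproduced from raw counts. The sketch below is an illustration, not Phonely’s implementation. It assumes the z-test uses a pooled standard error (the usual form of a two-proportion z-test; the source states only that the confidence interval is unpooled), that displayed rates are rounded to whole percentages, and that 1.96 is the 95% critical value. `explain_ab` is a hypothetical helper name, and the counts in the example are made up.

```python
import math
from statistics import NormalDist


def explain_ab(control_calls: int, control_successes: int,
               variant_calls: int, variant_successes: int) -> dict:
    """Compute the A/B result statistics described above (a sketch)."""
    n_a, s_a = control_calls, control_successes
    n_b, s_b = variant_calls, variant_successes
    if n_a <= 0 or n_b <= 0:
        raise ValueError("each arm needs at least one completed call")
    if not (0 <= s_a <= n_a and 0 <= s_b <= n_b):
        raise ValueError("successes must be between 0 and calls")

    # Success rates from raw counts.
    p_a, p_b = s_a / n_a, s_b / n_b

    # Delta from displayed rates; whole-percent rounding is an assumption.
    delta_pp = round(p_b * 100) - round(p_a * 100)

    # Two-proportion z-test with a pooled standard error (assumed).
    pooled = (s_a + s_b) / (n_a + n_b)
    se_pooled = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se_pooled == 0:
        raise ValueError("z-test undefined when every call succeeded or every call failed")
    z = (p_b - p_a) / se_pooled

    # Chance variant wins = normalCdf(z); one-sided p-value = 1 - normalCdf(z).
    chance_variant_wins = NormalDist().cdf(z)
    p_value = 1 - chance_variant_wins

    # 95% confidence interval for (variant rate - control rate), unpooled SE.
    se_unpooled = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    diff = p_b - p_a
    ci_95 = (diff - 1.96 * se_unpooled, diff + 1.96 * se_unpooled)

    return {
        "control_rate": p_a,
        "variant_rate": p_b,
        "delta_pp": delta_pp,
        "z": z,
        "chance_variant_wins": chance_variant_wins,
        "p_value": p_value,
        "ci_95": ci_95,
    }


# Hypothetical counts: control 50 successes of 200 calls, variant 40 of 180.
r = explain_ab(200, 50, 180, 40)
print(f"Control {r['control_rate']:.0%} vs variant {r['variant_rate']:.0%} "
      f"(delta {r['delta_pp']:+d} pp)")
print(f"z = {r['z']:.2f}, chance variant wins = {r['chance_variant_wins']:.0%}, "
      f"p = {r['p_value']:.2f}")
lo, hi = r["ci_95"]
print(f"95% CI for variant - control: {lo:+.1%} to {hi:+.1%}")
```

With these made-up counts the variant trails by 3 percentage points, z is about -0.64, the chance variant wins is about 26%, and the confidence interval spans zero, so the data does not yet show a clear winner.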

For example, for the “Greeting Message” test, the variant currently has an 11% chance of beating the control. That means the observed success rates favor the control right now. The variant may still recover as more calls come in, but based on the current sample, there is not enough evidence to call the variant better.

Avoid saying that a low chance variant wins means the test has permanently failed. A/B test results can change as more calls are completed, especially when the sample size is small.
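Because chance variant wins is normalCdf(z-score), an 11% figure can be translated back into the underlying z-score and p-value. A short sketch using Python’s standard-library `statistics.NormalDist`; `z_from_chance` is a hypothetical helper name. It shows that 11% corresponds to a z-score of about -1.23 (the variant’s rate is below the control’s) and a one-sided p-value of 0.89.

```python
from statistics import NormalDist


def z_from_chance(chance_variant_wins: float) -> float:
    """Invert chance variant wins = normalCdf(z) to recover the z-score."""
    return NormalDist().inv_cdf(chance_variant_wins)


chance = 0.11  # "11% chance variant wins"
z = z_from_chance(chance)
p_value = 1 - chance  # one-sided p-value = 1 - normalCdf(z)
print(f"z ≈ {z:.2f}, one-sided p-value ≈ {p_value:.2f}")  # z ≈ -1.23, one-sided p-value ≈ 0.89
```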

Editing an A/B Test

Phonely allows you to update your A/B test at any point before or during the experiment. This is useful when you want to refine the test name, description, or routing percentage, or modify the Test Agent itself. You can edit your test from the Planned or In Progress section.

- Open your A/B Testing dashboard.

- Find the test you want to modify.

- Click the ⋮ menu in the top-right corner of the test card.

- Choose an option: edit the test’s settings, edit the B Test Agent, or delete the test.

The test settings you can edit include:

- Test name

- Description

- What you’re testing (Voice, Workflow, Agent Settings, etc.)

- End criteria (number of calls or days)

- Call route percentage

- Success criteria

Edit B Test Agent

Selecting this option opens the Test Agent, which is the duplicate created during setup. You’ll see a banner reminding you:

“You are editing a test agent. This agent will be used to test the new changes.”

Only modify the specific variables you want to test. All other settings should remain identical to your Base Agent to ensure fair and reliable results. When finished, click Continue to save your changes.

Delete Test

If you want to remove a planned or completed test entirely, choose Delete Test. Tests that are already running cannot be deleted until they finish.

When to Edit a Test

You might want to make edits when:

- The description or test name needs clarification.

- You want to adjust call routing (e.g., from 30% to 45%).

- You need to modify the workflow, voice, or knowledge base version in the Test Agent.

- You decide to extend the test duration from 1 day to 7 days.

- You want to change the success criteria.