What Is Simulation Testing?

Simulation testing uses AI to generate realistic phone conversations between a virtual “customer” and your Phonely agent. These simulations behave like real calls, including intent changes, interruptions, topic variations, and unexpected questions. Simulation testing helps you:

- Validate workflows before going live.

- Detect misconfigurations, routing errors, and dead-ends.

- Test knowledge base accuracy.

- Stress-test prompts and guardrails.

- Preview how your agent handles different tones and behaviors.

- Ensure your agent behaves consistently after updates.

Where to Access Simulation Testing

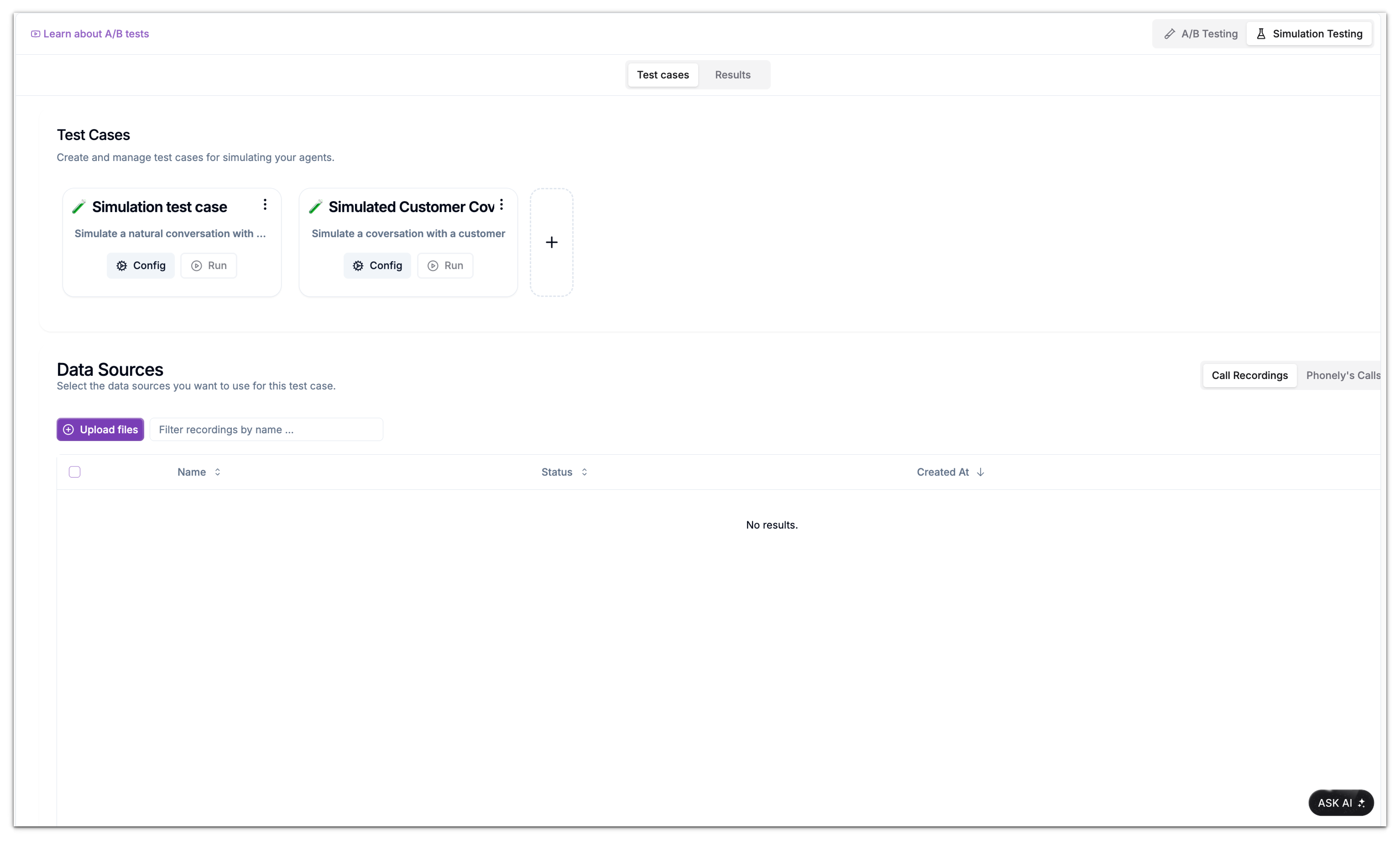

After you open the simulation workspace, you will see two sub-tabs:

- Test Cases – Create and configure simulations.

- Results – Review past simulation outputs.

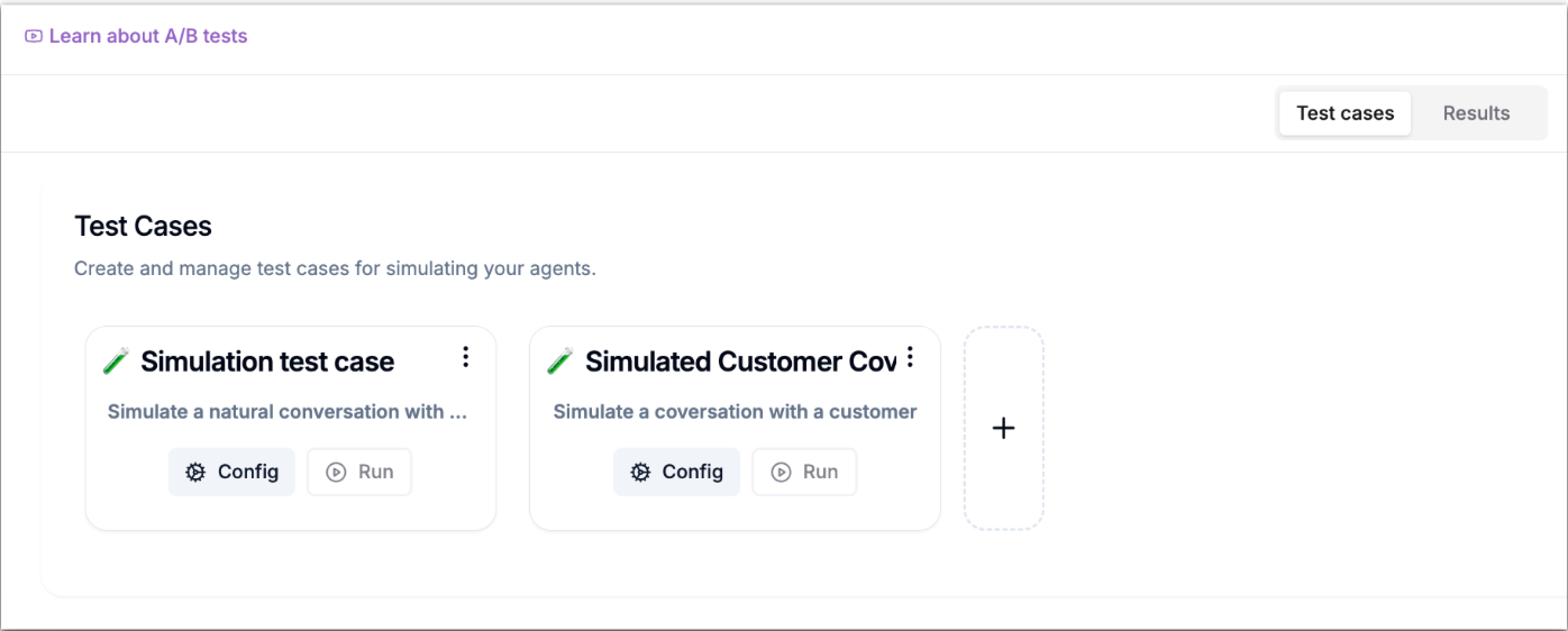

Test Cases Overview

In the Test Cases area, you can manage every simulation you create. Each test case card includes two key actions: Config and Run. Clicking Config opens the editor, where you can update the case name, description, number of test instances, and the randomness (variance) of the generated conversations. You can fine-tune how the simulated caller behaves before running the test. The Run button immediately triggers the simulation using the settings you’ve configured.

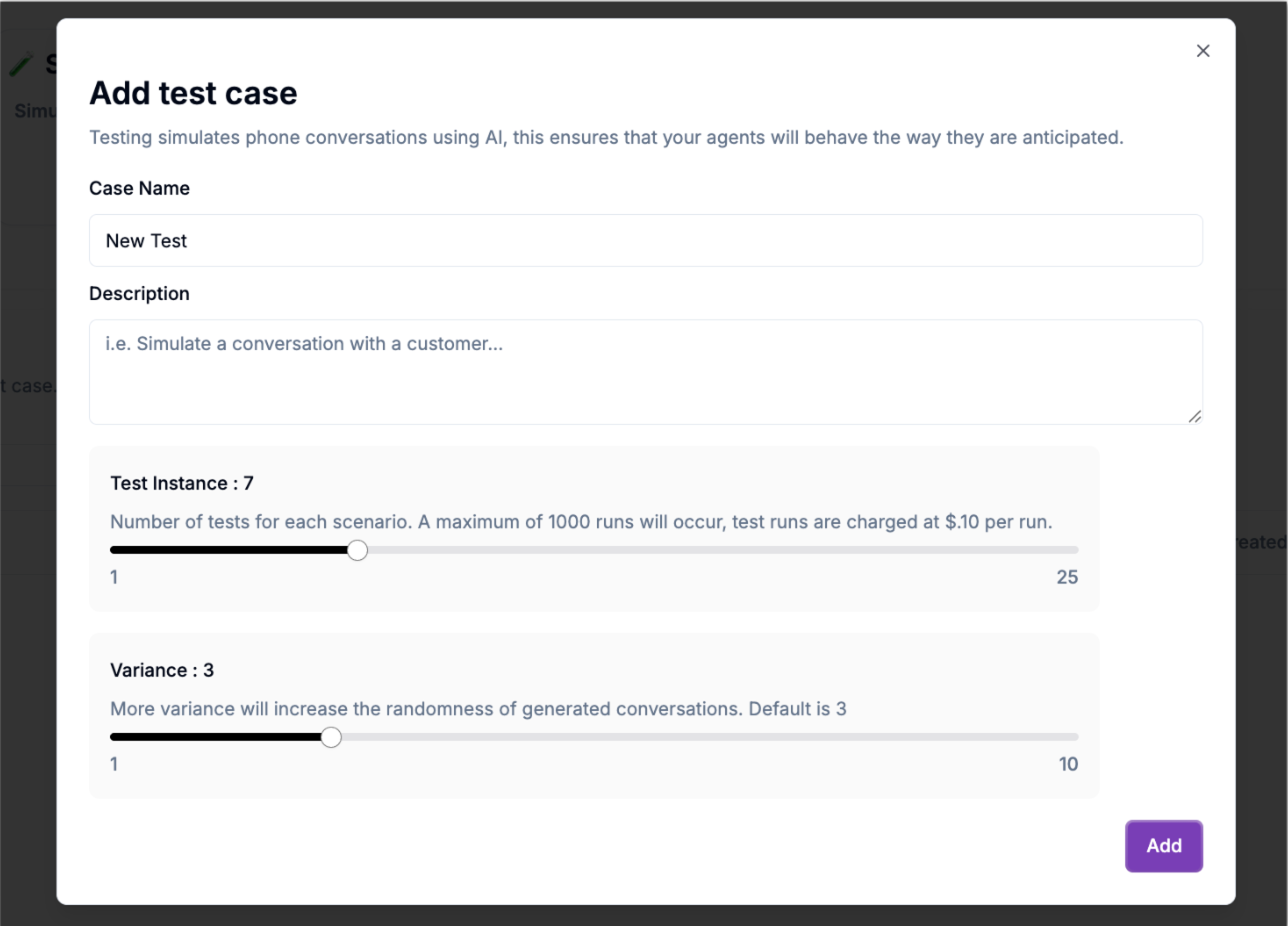

How to Create a Simulation Test Case

Follow these steps to build your first simulation:

Configure Test Parameters

Two sliders let you define the quantity and nature of the simulations:

Test Instance

Defines how many conversations to generate.

- Range: 1–25 per run

- Up to 1000 total runs (billed at $0.10 per run)
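To budget a test session before moving the slider, the limits above can be turned into a quick estimate. This is a minimal sketch and not part of Phonely: the function name, the `launches` parameter, and the constants are hypothetical, and it assumes that each generated conversation is billed as one $0.10 run and counts toward the 1000-run cap. Check your Phonely billing page if that assumption matters for your plan.

```python
# Hypothetical planning helper; Phonely does not ship this code.
MIN_INSTANCES = 1               # slider lower bound from the article
MAX_INSTANCES = 25              # slider upper bound from the article
MAX_TOTAL_CONVERSATIONS = 1000  # ASSUMPTION: the "1000 total runs" cap counts conversations
CENTS_PER_CONVERSATION = 10     # $0.10, kept in cents to avoid float rounding


def estimate_cost_cents(instances: int, launches: int = 1) -> int:
    """Estimate the cost of pressing Run `launches` times at a given slider value."""
    if not MIN_INSTANCES <= instances <= MAX_INSTANCES:
        raise ValueError(f"instances must be between {MIN_INSTANCES} and {MAX_INSTANCES}")
    if launches < 1:
        raise ValueError("launches must be at least 1")
    total = instances * launches
    if total > MAX_TOTAL_CONVERSATIONS:
        raise ValueError(f"{total} conversations exceeds the cap of {MAX_TOTAL_CONVERSATIONS}")
    return total * CENTS_PER_CONVERSATION


cents = estimate_cost_cents(instances=25, launches=4)
print(f"${cents / 100:.2f}")  # 100 conversations -> $10.00
```

Working in whole cents keeps the arithmetic exact; the value is converted to dollars only for display.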

Variance

Controls the randomness of the simulated customer behavior.

- 1 = Low Variance: Conversations stay similar across runs.

- 10 = High Variance: Conversations differ dramatically (ideal for stress testing).

Higher variance helps reveal weaknesses in:

- Prompts

- Routing

- Tone handling

- Unexpected customer questions

Working With Data Sources

Data sources determine where the “customer side” of the simulated conversation comes from. You can attach any of the following:

- Call Recordings

- Phonely’s Calls (past real calls)

- AI Evaluators

Call Recordings

Call Recordings let you upload audio files that represent real customer speech. This is useful when you want the AI to react to real-world phrasing, accents, pacing, or emotional tone. When you choose Call Recordings, Phonely displays an empty table until you upload a file. To upload:

- Click Upload Files

- Enter a recording name (e.g., “Customer refund call”)

- Choose Inbound or Outbound

- Drag or browse to add audio files

- Press Upload

Phonely’s Calls

This tab displays recordings from actual calls your agent has already handled inside Phonely. It is especially helpful for:

- Regression testing

- Verifying bug fixes

- Comparing old vs. new agent behavior

AI Evaluators

AI Evaluators are the most flexible data source. Instead of using audio recordings, an evaluator acts as the customer during the simulation. To create one:

- Choose a conversation style, such as Casual, Angry, Frustrated, Happy, Distracted, Sad, or Demanding.

- Write detailed instructions that define how the evaluator behaves. Example: “Act like a customer trying to book a flight but constantly changing their mind.”

- Define the success criteria. Example: “Success means the agent confirms date, destination, and seat type.”
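If your team drafts evaluators in a shared file before entering them in the Phonely interface, the three fields above map naturally onto a small record. The sketch below is only a drafting aid: `EvaluatorDraft` and `KNOWN_STYLES` are hypothetical names, nothing here calls a Phonely API, and the style list contains only the styles named in this article, so it may be incomplete.

```python
from dataclasses import dataclass

# ASSUMPTION: only the styles named in this article; extend if the UI offers more.
KNOWN_STYLES = ("Casual", "Angry", "Frustrated", "Happy", "Distracted", "Sad", "Demanding")


@dataclass(frozen=True)
class EvaluatorDraft:
    """Hypothetical local draft of an AI Evaluator, to be copied into the UI by hand."""
    style: str
    instructions: str
    success_criteria: str

    def __post_init__(self) -> None:
        # Catch incomplete drafts before anyone pastes them into the form.
        if self.style not in KNOWN_STYLES:
            raise ValueError(f"unknown style {self.style!r}; expected one of {KNOWN_STYLES}")
        if not self.instructions.strip():
            raise ValueError("instructions must not be empty")
        if not self.success_criteria.strip():
            raise ValueError("success_criteria must not be empty")


draft = EvaluatorDraft(
    style="Frustrated",
    instructions="Act like a customer trying to book a flight but constantly changing their mind.",
    success_criteria="Success means the agent confirms date, destination, and seat type.",
)
print(draft.style, "|", draft.success_criteria)
```

Keeping the success criteria next to the instructions makes it easier to review whether each evaluator actually tests a concrete, checkable outcome.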